Execution Phases of the Use Case Tool

This section breaks down the execution phases of the Qubitz AI multi-agent research pipeline.

Phase 1: Initialization

The system establishes connectivity and plans the research strategy.

[SYSTEM] → Connecting to use case tool...

[AGENT: RESEARCH] → Planning the research strategy and subtasks...

[SYSTEM] → Establishing secure connection...

Phase 2: Initial Research

AGENT: RESEARCH begins broad research based on the submitted business context: it crawls the company website, analyzes publicly available information, and gathers market intelligence. The Connection Log shows an elapsed-time counter (from 10s to 120s) as research progresses.

[AGENT: RESEARCH] → Conducting initial research... (10s elapsed)

[STATUS] → Research in progress...

[AGENT: RESEARCH] → Conducting initial research... (120s elapsed)

Phase 3: Subtopic Research

The research agent decomposes the broad analysis into specific subtopics and investigates each in parallel. The Connection Log displays a raw JSON-formatted agent message with the research agent's identifier, followed by messages for each subtopic.

Typical subtopics observed for a cloud services company:

- Company's Market Positioning in the cloud/AI ecosystem

- Priority AI Automation Use Cases with quantified ROI potential

- Platform Enhancement Opportunities with competitive differentiation

- Implementation Framework and Guidelines for enterprise adoption
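The parallel investigation described above can be sketched with `asyncio`. This is a minimal illustration, not the pipeline's actual code: `research_subtopic` and `research_all` are hypothetical names, and the placeholder coroutine stands in for whatever network or model calls the research agent really makes.

```python
import asyncio

# Subtopic names adapted from the list above.
SUBTOPICS = [
    "Market Positioning",
    "Priority AI Automation Use Cases",
    "Platform Enhancement Opportunities",
    "Implementation Framework and Guidelines",
]


async def research_subtopic(name: str) -> dict:
    """Hypothetical placeholder for one subtopic investigation."""
    await asyncio.sleep(0)  # stands in for network or model calls
    return {"subtopic": name, "findings": f"notes on {name}"}


async def research_all(subtopics: list[str]) -> list[dict]:
    # gather() runs the coroutines concurrently and returns results in input order.
    return await asyncio.gather(*(research_subtopic(s) for s in subtopics))


results = asyncio.run(research_all(SUBTOPICS))
```

Because `gather()` preserves input order, the results can be matched back to their subtopics by position even though the work runs concurrently.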

Phase 4: Report Writing

The writer agent generates comprehensive reports from the research data. AGENT: WRITER generates both reports in parallel:

- The AI Discovery Report, using a structured template with decision matrices, architecture diagrams, scoring tables, and roadmap phases

- The Deep Research Report, a narrative document with sections, data tables, and citations covering competitive positioning, industry opportunities, platform strategy, and strategic roadmap

Steps:

- Research data is processed and structured

- AGENT: WRITER generates both reports:

  - AI Discovery Report (structured format)

  - Deep Research Report (narrative format)

- Each report has distinct content types with specific formatting

- Elapsed time counters track writing progress

- Character counts are tracked internally

AI Discovery Report Output

- Format: Structured template with sections, tables, matrices

- Content: Decision matrices, architecture diagrams, scoring tables, roadmap phases

Deep Research Report Output

- Format: Narrative document with sections, citations, data tables

- Content: Competitive positioning, industry opportunities, platform strategy, strategic roadmap

[AGENT: WRITER] → Generating AI Discovery Report... (12s)

[AGENT: WRITER] → Writing decision matrices and scoring tables... (48s)

[AGENT: WRITER] → Composing Deep Research Report... (60s)

[AGENT: WRITER] → Writing research narrative and analysis... (80s)

[AGENT: WRITER] → Finalizing report sections... (96s)

Phase 5: Report Publishing

The dual publisher agent publishes both reports and confirms completion with exact character counts.

Steps:

- AGENT: DUAL_PUBLISHER receives completed reports from AGENT: WRITER

- Reports are validated for completeness and format

- Exact character counts are calculated and logged

- Reports are published to the artifact system

- System transitions to use case generation mode

[AGENT: DUAL_PUBLISHER] → Publishing AI Discovery Report...

[AGENT: DUAL_PUBLISHER] → Publishing Deep Research Report...

[AGENT: DUAL_PUBLISHER] → Reports published (standard: 55,867 chars, structured: 24,087 chars)

[STATUS] → Multi-agent research completed — preparing for use case generation

[STEP: REVIEW] → Initiating use case generation from research data...
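As a rough illustration of how the character-count confirmation could be produced, the sketch below builds a log line in the same shape as the one shown above. The function name `format_publish_line` is hypothetical, and it assumes characters are counted with Python's `len()` (Unicode code points); the source does not say how the pipeline counts them.

```python
def format_publish_line(standard_report: str, structured_report: str) -> str:
    """Build a publish confirmation line with exact character counts."""
    # len() counts code points; byte counts would differ for non-ASCII text.
    return (
        "[AGENT: DUAL_PUBLISHER] → Reports published "
        f"(standard: {len(standard_report):,} chars, "
        f"structured: {len(structured_report):,} chars)"
    )


# Dummy strings sized to match the counts in the example log above.
line = format_publish_line("x" * 55867, "y" * 24087)
```

The `:,` format specifier inserts the thousands separators seen in the log output.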

Phase 6: Use Case Generation

The system streams real-time use case generation based on the completed research.

Steps:

- Research data is processed for use case extraction

- AI identifies priority automation opportunities

- Each use case is generated with detailed metadata

- ROI calculations are performed for each use case

- Alignment scores are calculated based on business goals

- Use cases are prioritized by impact and feasibility

Real-Time Display

- Counter Badge: Increments live ("3 use cases generated" to "6 use cases generated" to "12 use cases generated")

- JSON Preview: Displays each use case as it's created

- "View Analysis..." Button: Appears when generation completes

Each Use Case Includes

| Field | Description |

|---|---|

| Title | Descriptive name (e.g., "Automated Security Threat Detection") |

| Description | Detailed explanation of the use case |

| estimated_roi | Quantified ROI percentage or dollar value |

| alignment_score | 0-100 score based on strategic fit |

| implementation_timeline | Estimated months to deploy |

| business_value | High / Medium / Low classification |

| technical_complexity | Complexity rating |

| required_capabilities | List of needed technologies and skills |
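The field table above could be modeled as a small data class. This is a sketch under assumptions: the Python types, the lowercase `title` and `description` attribute names, and the validation rules are inferred from the descriptions in the table, not taken from the tool's actual schema. Apart from the title, the example values are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    title: str
    description: str
    estimated_roi: str            # percentage or dollar value, kept as text
    alignment_score: int          # 0-100, strategic fit
    implementation_timeline: int  # estimated months to deploy
    business_value: str           # "High", "Medium", or "Low"
    technical_complexity: str     # rating scale is not specified in the source
    required_capabilities: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Enforce the two constraints the table states explicitly.
        if not 0 <= self.alignment_score <= 100:
            raise ValueError("alignment_score must be between 0 and 100")
        if self.business_value not in {"High", "Medium", "Low"}:
            raise ValueError("business_value must be High, Medium, or Low")


# Illustrative values only.
example = UseCase(
    title="Automated Security Threat Detection",
    description="Flag anomalous activity in infrastructure logs.",
    estimated_roi="25%",
    alignment_score=87,
    implementation_timeline=6,
    business_value="High",
    technical_complexity="Medium",
    required_capabilities=["log ingestion", "anomaly detection"],
)
```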

[STATUS] → Generating use cases... (3 generated)

[STATUS] → Generating use cases... (6 generated)

[STATUS] → Generating use cases... (9 generated)

[STATUS] → Generating use cases... (12 generated)

[STATUS] → Use case generation complete

Typical output: 10-12 use cases with full metadata.
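The prioritization step listed earlier (by impact and feasibility) is not specified in detail. One plausible ordering, shown here purely as an assumption, ranks use cases by `business_value` first and breaks ties with a higher `alignment_score`. The `prioritize` helper and the sample records are hypothetical.

```python
# Lower rank sorts first.
VALUE_RANK = {"High": 0, "Medium": 1, "Low": 2}


def prioritize(use_cases: list[dict]) -> list[dict]:
    """Order by business value, then by descending alignment score."""
    return sorted(
        use_cases,
        key=lambda uc: (VALUE_RANK[uc["business_value"]], -uc["alignment_score"]),
    )


# Made-up sample records for illustration.
sample = [
    {"title": "A", "business_value": "Medium", "alignment_score": 90},
    {"title": "B", "business_value": "High", "alignment_score": 70},
    {"title": "C", "business_value": "High", "alignment_score": 85},
]
ordered_titles = [uc["title"] for uc in prioritize(sample)]
```

A real implementation would likely also weigh `technical_complexity` or `implementation_timeline` as a feasibility signal; how those are combined is not stated in this document.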

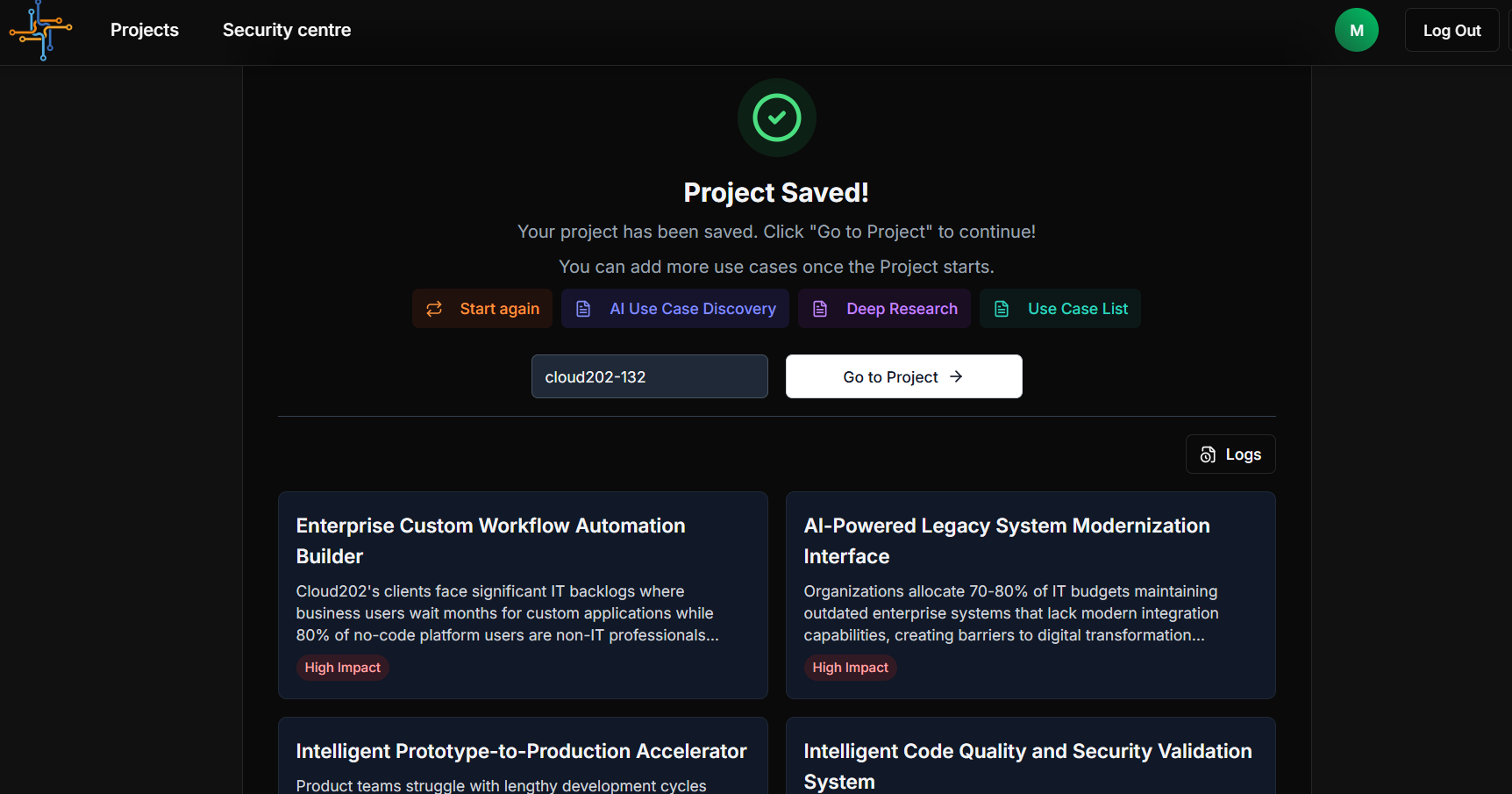

Phase 7: Results Dashboard

The analysis modal closes and the Results Dashboard loads with the completed project.

Steps:

- Analysis modal transitions to success state

- Green checkmark icon appears

- Status updates: "Saving Your Project..." to "Project Saved"

- Auto-generated Project ID is created and displayed

- Project ID becomes editable in input field

- Four report tabs appear below success indicator

- Use case grid populates with all generated use cases

Success State Components

| Component | Details |

|---|---|

| Success Icon | Green checkmark |

| Status Text | "Saving Your Project..." to "Project Saved" |

| Project ID | Auto-generated format: company-slug-numeric-id (e.g., cloud202-897) |

| Edit Field | Editable input for renaming project |

| Save Button | "Saving..." spinner to "Saved" confirmation |

| Helper Text | "You can add more use cases once the Project starts." |
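The `company-slug-numeric-id` format in the table above can be sketched as follows. The `make_project_id` helper is hypothetical, the slug rule (lowercase, with runs of non-alphanumeric characters collapsed to hyphens) is an assumption, and the numeric part is passed in because the source does not say how it is assigned.

```python
import re


def make_project_id(company_name: str, numeric_id: int) -> str:
    """Build an ID such as 'cloud202-897' from a company name and a number."""
    # Lowercase, replace runs of non-alphanumerics with '-', trim stray hyphens.
    slug = re.sub(r"[^a-z0-9]+", "-", company_name.lower()).strip("-")
    return f"{slug}-{numeric_id}"


project_id = make_project_id("Cloud202", 897)
```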

Report Tabs

| Tab | Icon | Action | Destination |

|---|---|---|---|

| Start again | Refresh (green) | Reset form for new analysis | Use Case Tool form (blank) |

| AI Use Case Discovery | Document | Open AI Discovery Report | Artifact viewer with PDF + AI chat |

| Deep Research | Document | Open Deep Research Report | Artifact viewer with PDF + AI chat |

| Use Case List | Document | Open Use Case List | Artifact viewer with PDF + AI chat |

Use Case Grid

- Layout: Two-column card grid

- Card Content: Title, truncated description, impact badge

- Impact Badges:

  - High Impact (red badge)

  - Medium Impact (yellow badge)

  - Low Impact (blue badge, if applicable)

- Interaction: Click any card to view full use case details
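A card in the grid described above could be derived from a use case roughly like this. The sketch assumes the impact badge is driven by the `business_value` field and that descriptions are cut to a fixed length; neither is confirmed by the source, and `card_summary` and `max_len` are hypothetical names.

```python
# Badge colors as listed above.
BADGE_COLORS = {"High": "red", "Medium": "yellow", "Low": "blue"}


def card_summary(title: str, description: str, business_value: str,
                 max_len: int = 80) -> dict:
    """Return the fields a grid card displays."""
    if len(description) <= max_len:
        short = description
    else:
        # Reserve one character for the ellipsis.
        short = description[: max_len - 1].rstrip() + "…"
    return {
        "title": title,
        "description": short,
        "badge": f"{business_value} Impact",
        "badge_color": BADGE_COLORS[business_value],
    }


card = card_summary("Automated Security Threat Detection", "word " * 30, "High")
```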

Connection Log Format

Each Connection Log entry follows the pattern: [AGENT_TYPE: SUBTYPE] → Message... (elapsed time)

Color coding:

| Agent Type | Color |

|---|---|

| SYSTEM | White |

| AGENT: RESEARCH | Blue |

| AGENT: WRITER | Purple |

| AGENT: DUAL_PUBLISHER | Green |

| STEP: REVIEW | Yellow |
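For anyone post-processing a saved Connection Log, the entry pattern and color table above can be parsed with a regular expression. This is a sketch based only on the sample lines in this document: `parse_log_line` is a hypothetical helper, and the regex treats a trailing `(Ns)` or `(Ns elapsed)` as the elapsed time while leaving other parenthesized suffixes in the message.

```python
import re
from typing import Optional

# Colors from the table above; tags not listed there (e.g. STATUS) map to None.
LOG_COLORS = {
    "SYSTEM": "white",
    "AGENT: RESEARCH": "blue",
    "AGENT: WRITER": "purple",
    "AGENT: DUAL_PUBLISHER": "green",
    "STEP: REVIEW": "yellow",
}

LOG_LINE = re.compile(
    r"^\[(?P<tag>[^\]]+)\]\s*→\s*(?P<message>.*?)"
    r"(?:\s*\((?P<seconds>\d+)s(?: elapsed)?\))?$"
)


def parse_log_line(line: str) -> Optional[dict]:
    """Split one log entry into tag, message, elapsed seconds, and color."""
    match = LOG_LINE.match(line.strip())
    if match is None:
        return None
    tag = match.group("tag")
    seconds = match.group("seconds")
    return {
        "tag": tag,
        "message": match.group("message"),
        "elapsed_seconds": int(seconds) if seconds else None,
        "color": LOG_COLORS.get(tag),
    }


entry = parse_log_line(
    "[AGENT: RESEARCH] → Conducting initial research... (10s elapsed)"
)
```

Lines such as `[STATUS] → Generating use cases... (3 generated)` parse with `elapsed_seconds` set to `None`, because the parenthesized suffix is a count and not a time.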

Error Handling & Recovery

If the pipeline encounters an error during execution:

- The Connection Log displays the error message

- The timer pauses

- The Cancel button remains available to abort

- If using "Run in Background," the project is saved with partial results where possible

To retry after an error, use the "Start again" tab on the Results Dashboard to re-submit the form.